This Week In Matrix 2018-09-07

2018-09-08 - This Week in Matrix - Matthew Hodgson

Hi all,

Ben's away today, so this TWIM is brought to you mainly in association with Cadair's TWIMbot!

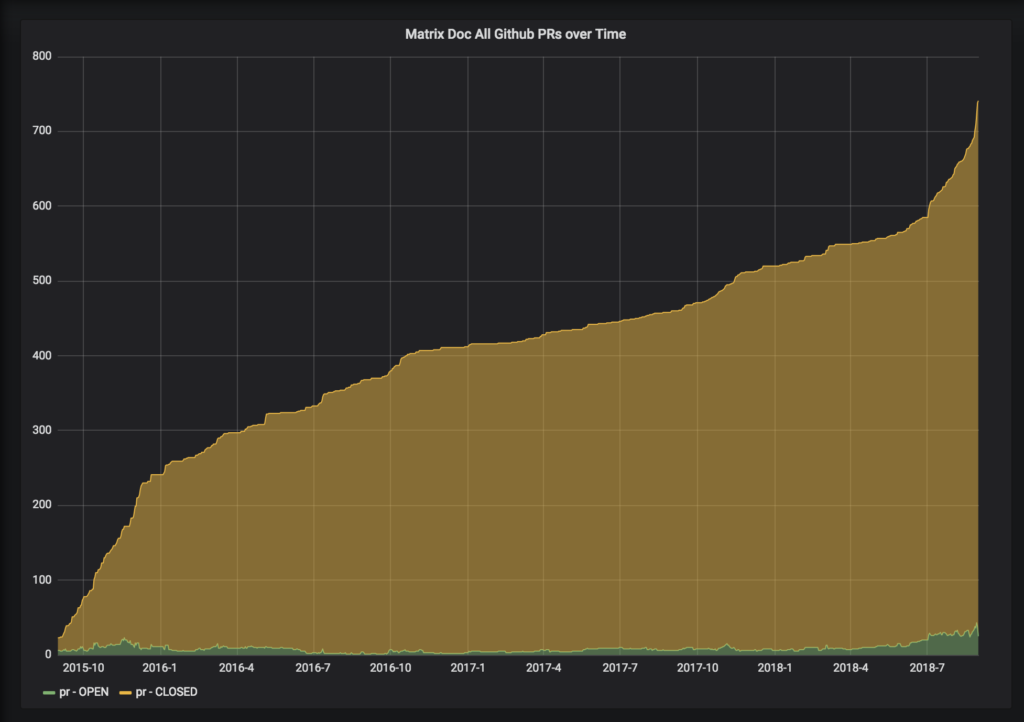

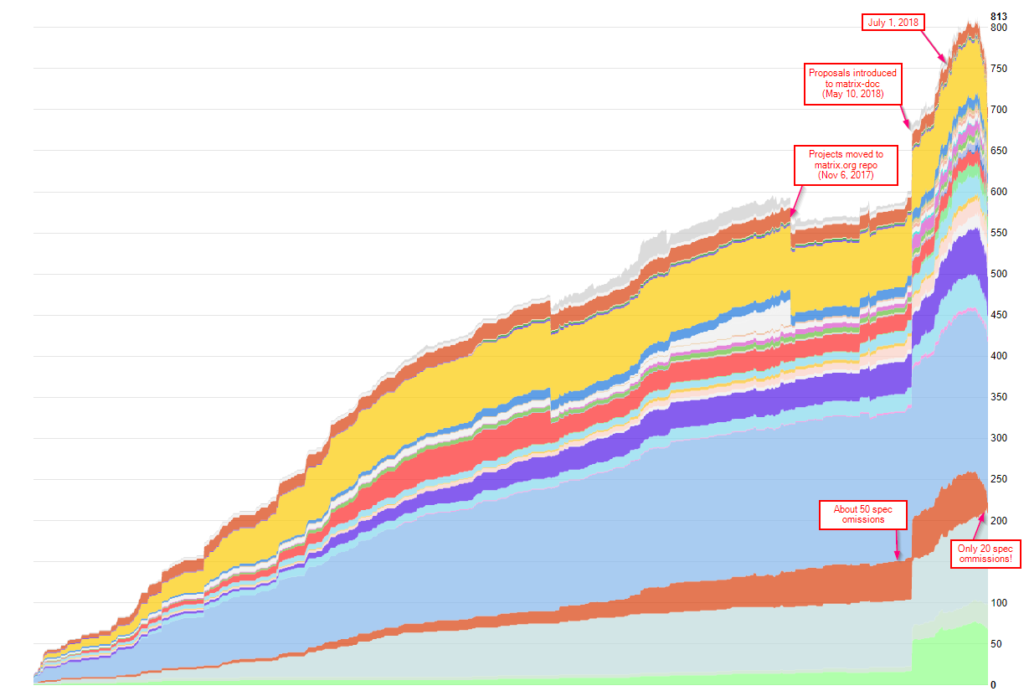

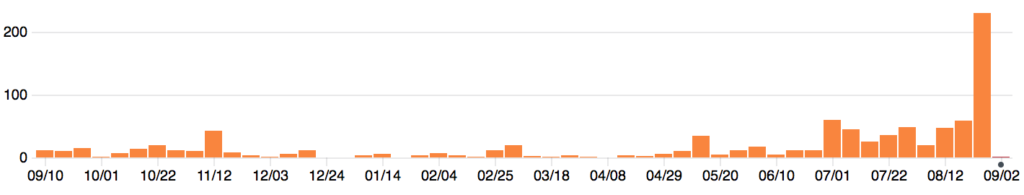

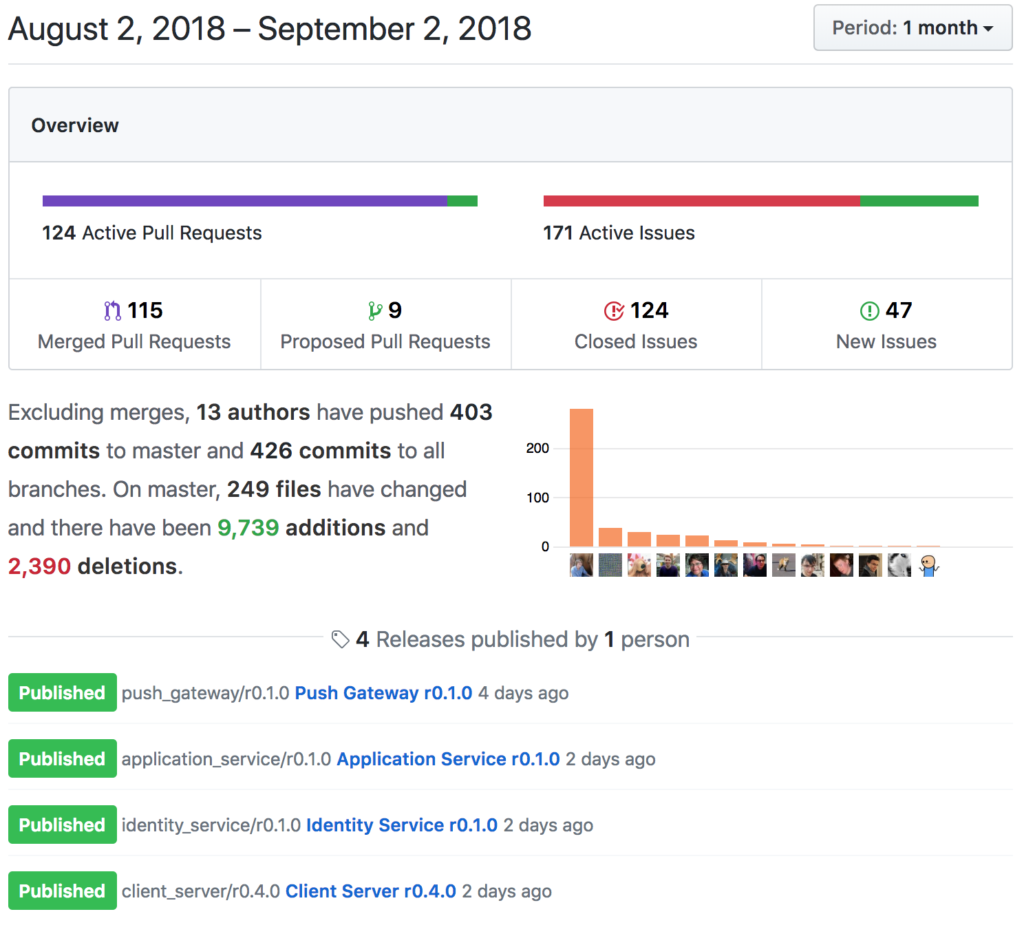

🔗Spec Activity

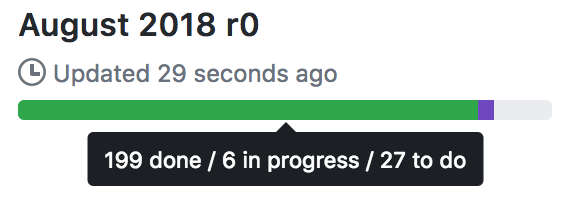

Since last week's sprint to get the new spec releases out, the core team's focus has shifted exclusively to the remaining work needed to cut the first stable release of the Server-Server API. In practice this means fleshing out the MSCs in flight and implementing them: work has progressed on improving auth rules, switching event IDs to be hashes, and others. Whilst implementing this in Synapse we're also doing a complete audit and overhaul of the current federation code, hence the 0.33.3.1 security release this week.

Meanwhile, in the community, ma1uta reports:

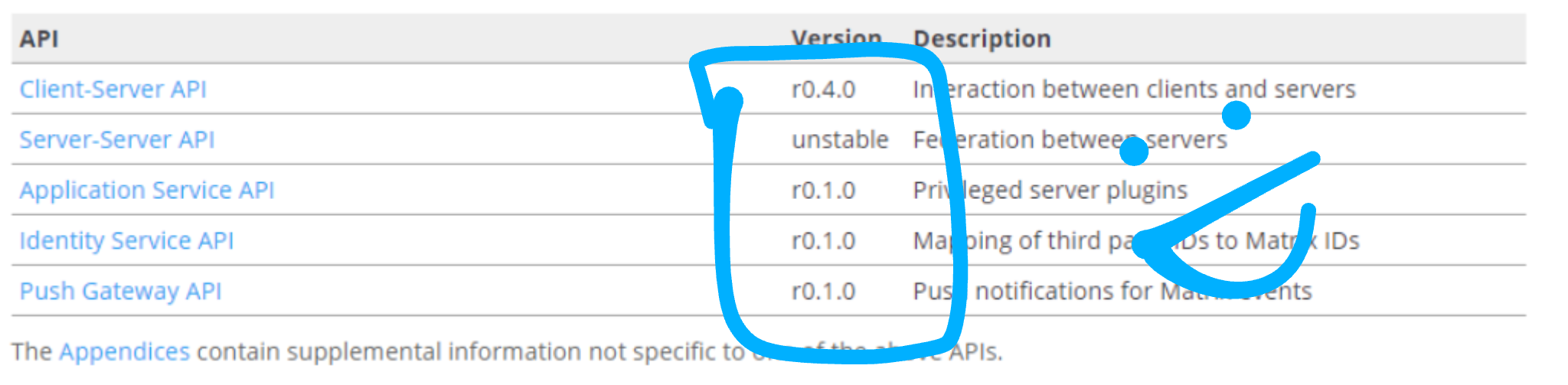

I am working on jeon (a Java Matrix API) to update it to the latest stable release. I have also changed the API versions to the form `rX.Y.Z-N`, where `rX.Y.Z` is the API version and `N` is the library version within that API. So I have prepared the Push API (r0.1.0-1), Identity API (r0.1.0-1) and Appservice API (r0.1.0-1) for the first release, and am currently updating the C2S API to r0.4.0.
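As a rough illustration of the `rX.Y.Z-N` scheme described above, here is a minimal Python sketch that splits such a string into its API version and library version. The names `JeonVersion` and `parse_version` are hypothetical and are not part of jeon; the strict three-number pattern is also an assumption based only on the examples given.

```python
import re
from typing import NamedTuple


class JeonVersion(NamedTuple):
    """Hypothetical container for a version string of the form rX.Y.Z-N."""
    api_version: str       # the Matrix API release, e.g. "r0.1.0"
    library_version: int   # the library revision within that API release


# Assumption: X, Y, Z and N are plain non-negative integers.
_VERSION_PATTERN = re.compile(r"^(r\d+\.\d+\.\d+)-(\d+)$")


def parse_version(text: str) -> JeonVersion:
    """Split 'rX.Y.Z-N' into (API version, library version)."""
    match = _VERSION_PATTERN.match(text)
    if match is None:
        raise ValueError(f"not in rX.Y.Z-N form: {text!r}")
    return JeonVersion(match.group(1), int(match.group(2)))
```

For example, `parse_version("r0.1.0-1")` yields an API version of `r0.1.0` and a library version of `1`, so two library releases against the same API release differ only in the trailing number.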

🔗XMPP Bridging

Are you in the market for a Matrix-XMPP bridge? When I say "market", I mean it because this week we have two announcements for bridging to XMPP! You can choose whether you'd prefer your bridge to be implemented as a puppet, or a bot.

Ma1uta has a new version of his Matrix-XMPP bridge:

It is a double-puppet bridge which can connect the Matrix and XMPP ecosystems. Just invite @_xmpp_master:ru-matrix.org and tell him: `@_xmpp_master: connect [email protected]` to connect the current room with the specified conference.

You can ask about this bridge in the #matrix-jabber-java-bridge:ru-matrix.org room.

Currently it supports only conferences and only `m.text` messages. 1:1 conversations and other message types will come later.

maze appeared this week and announced MxBridge, a new Matrix-XMPP bridge:

It works as a bot, so it is non-puppeting. Rooms can be mapped dynamically by the bot administrator(s). There is no support for 1-1 chats (yet). MxBridge is written as a multi-process application in Elixir and it should scale quite well (but don't tie me down on it ;)). https://github.com/djmaze/mxbridge

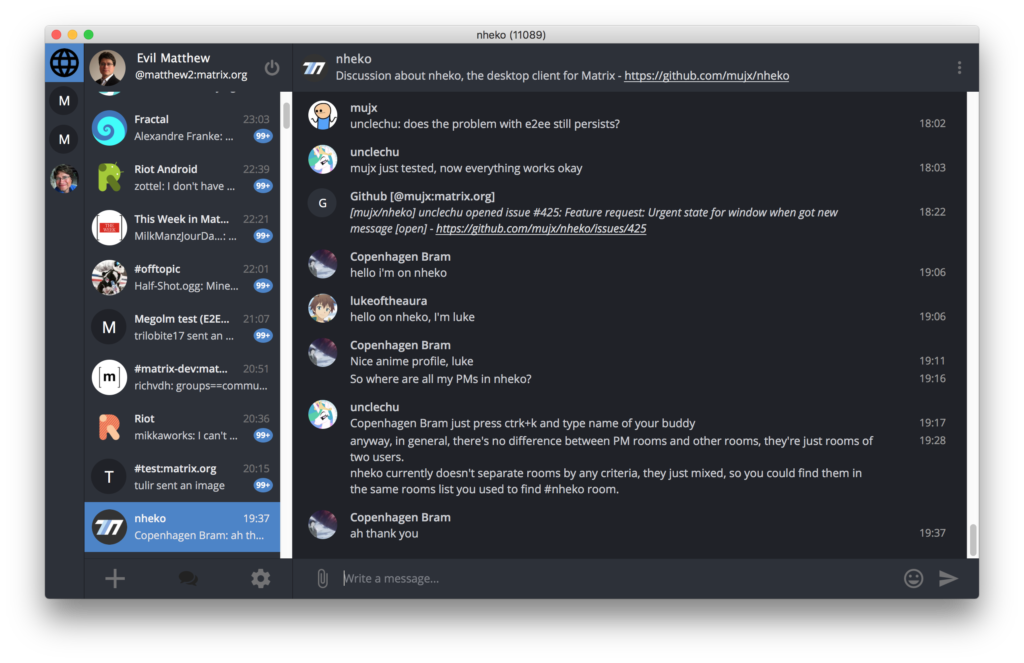

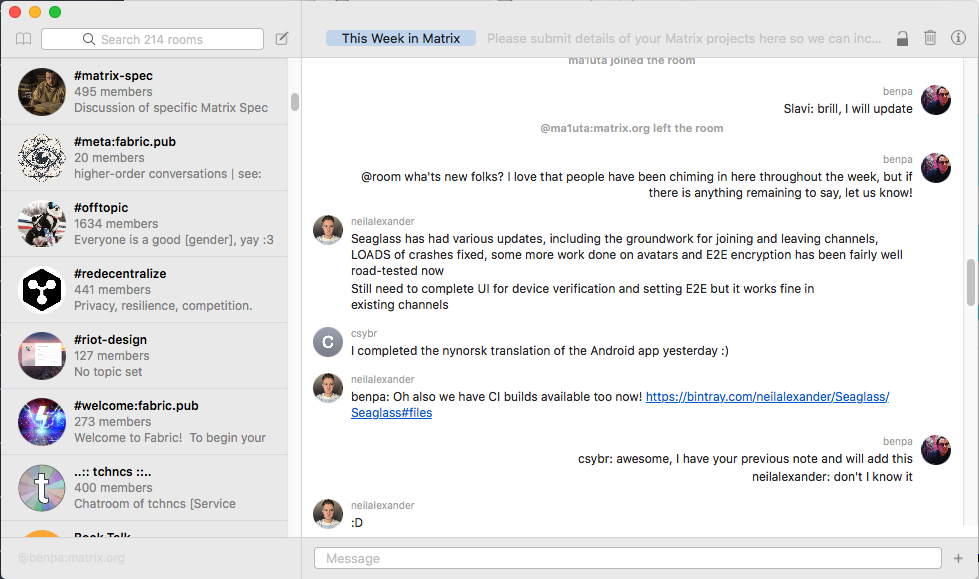

🔗Seaglass

Neil powers onwards with Seaglass, with updates this week including:

- Displaying stickers

- Lazy-loading room history on startup to help with performance

- Scrollback support (both forwards and backwards)

- Support for Matthew's Account (aka retries on initial sync for those of us with massive initial syncs, and general perf improvements to nicely support >2000 rooms)

- Better avatar support & cosmetics on macOS Mojave

- Encryption verification support, device blacklisting and message information

- Ability to turn encryption on in rooms

- Responding to encryption being turned on in rooms

- Paranoid mode for encryption (only send to verified devices)

- Invitation support (both in UI and /invite)

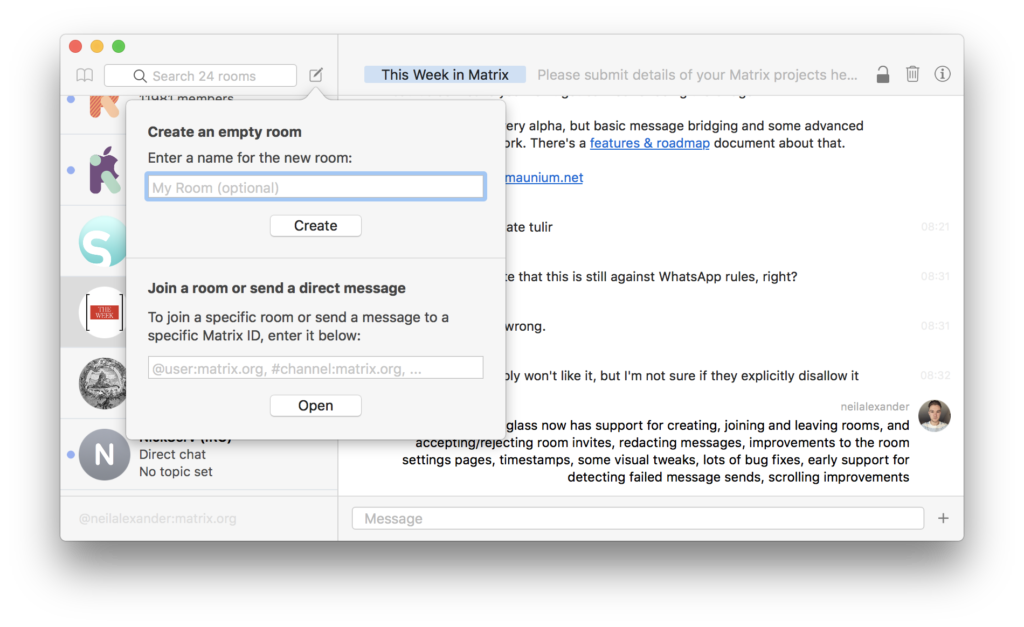

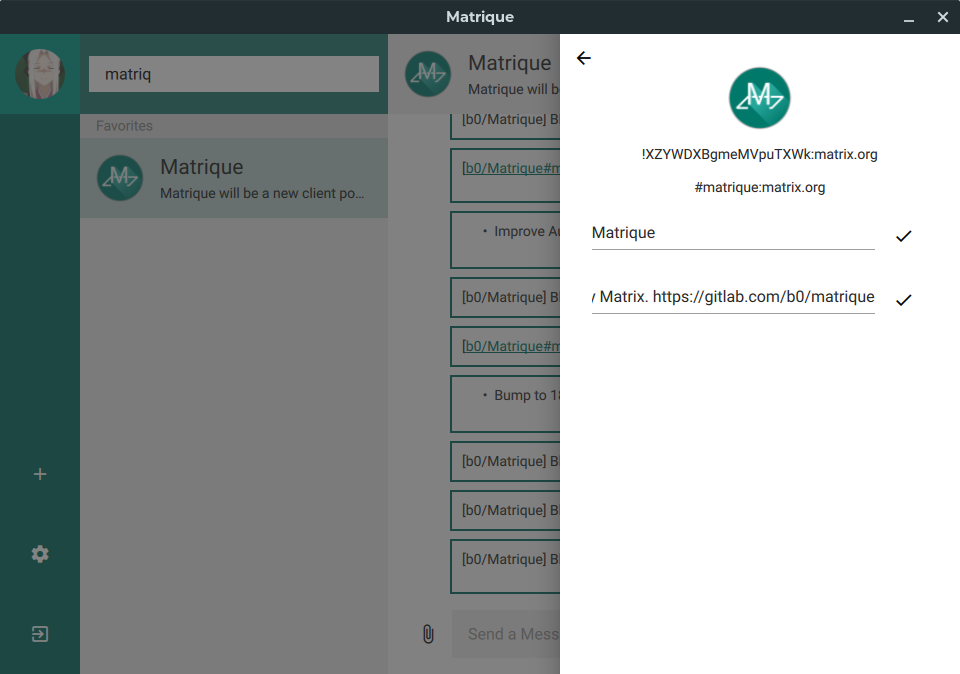

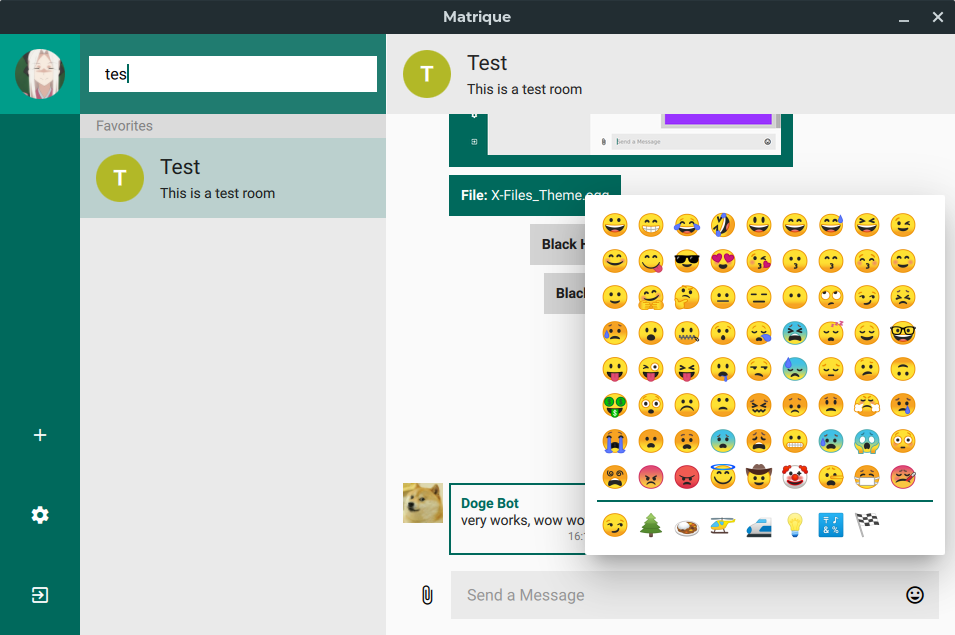

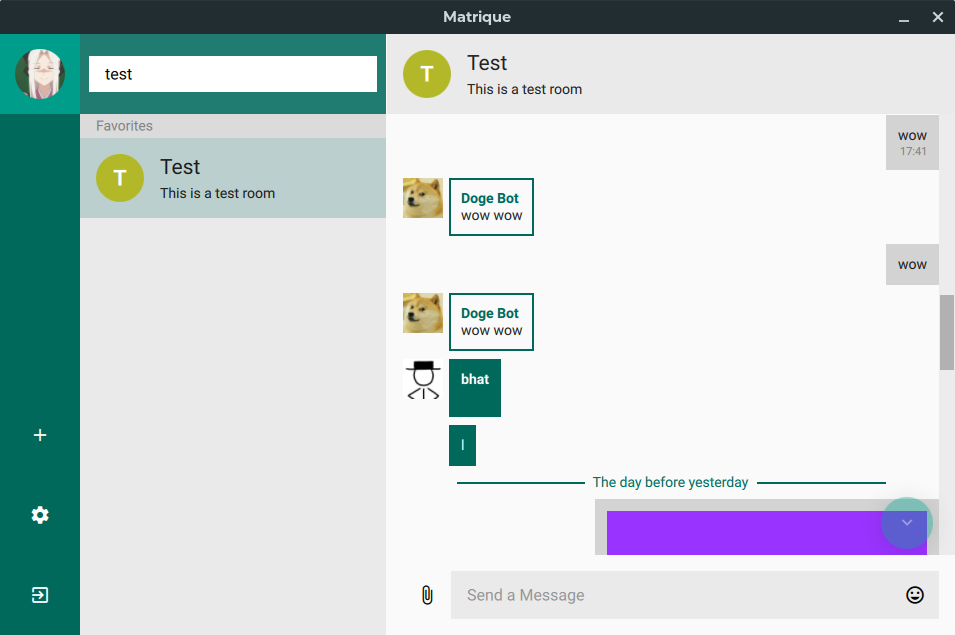

🔗Matrique

Blackhat announces that Matrique's new design is almost done, along with nightly builds for GNU/Linux, macOS and Windows!

🔗Fractal

Alexandre Franke says:

Fractal 3.30 got released alongside the rest of GNOME. It includes a bunch of new and updated translations, and redacted messages are now hidden.

Meanwhile, hidden in this screenshot, uhoreg noted that E2E plans are progressing...

🔗Riot

Bruno has been hacking away on Riot/Web, squashing the remaining Lazy Loading Members defects and making various related optimisations and fixes. We also released Riot/Web 0.16.3 as a fairly minor point release. Unfortunately it has a regression with DM avatars, which is fixed in 0.16.4; a first RC for that was cut a few hours ago and it should be released on Monday. Meanwhile the first cut of Lazy Loading also got implemented on Android. Both are hidden behind labs flags, but we're almost at a point where we can turn it on now! Otherwise, the Riot team has got sucked into working on commercial Matrix stuff, for better or worse (all shall be revealed shortly though!)

🔗Construct

Jason has been working heavily on Construct, and has new test users. Construct is able to federate with Synapse and the rest of the Matrix ecosystem. mujx has created a docker for Construct which streamlines its deployment.

Construct development is still occurring here https://github.com/jevolk/charybdis but we are now significantly closer to pushing the first release to https://github.com/matrix-construct. Also feel free to stop by in #test:zemos.net / #zemos-test:matrix.org as well -- a room hosted by Construct, of course.

tulir has now deployed using the standalone install instructions on a very small LXC VM using ZFS. Unfortunately ZFS does not support O_DIRECT (direct disk IO) which is how Construct achieves maximum performance using concurrent reads. This is not a problem though when using an SSD or for personal deployments. I'll be posting more about how Construct hacked RocksDB to use direct IO, which can get the most out of your hardware with multiple requests in-flight (even with an SSD).

🔗Synapse

Work this week was split between spec and security work, including the critical 0.33.3.1 update. If you haven't upgraded, please do so immediately.

Otherwise, Hawkowl has been on a mission to finish the Python 3 port, which is now almost merged. Testers should probably wait until it fully merges to the develop branch and we'll yell about it then, but impatient adrenaline enthusiasts may want to check out the hawkowl/py3-3 branch (although it may explode in your face, mangle your DB and format your cat, and probably misses lots of recent important PRs like the 0.33.3.1 stuff). However, I've been running a variant on some servers for the last few days without problems, and it seems (placebo effect notwithstanding) incredibly snappy...

Meanwhile, the Lazy Loaded Member implementation got sped up by 2-3x, which makes /sync roughly 2-3x faster than it would be without Lazy Loading. This hasn't merged yet, but was the main final blocker behind Lazy Loading going live!

🔗matrix-docker-ansible-deploy

Slavi reports:

matrix-docker-ansible-deploy now supports bridging to Telegram by installing tulir's mautrix-telegram bridge. This feature is contributed by @izissise.

In addition, Matrix Synapse is now more configurable from the playbook, with support for enabling stats reporting, configuring the event cache size, and password peppering.

🔗Matrix Python SDK needs a maintainer

We should say a huge Thank You to &Adam for his work leading the Python SDK over the previous months! Unfortunately due to a busy home life (best of luck for the second child!) he has decided to step down as lead maintainer. Anyone interested in this project should head to https://github.com/matrix-org/matrix-python-sdk/issues/279, and also come and chat in #matrix-python-sdk:matrix.org.

🔗MatrixToyBots!

A new bot appears! Are you a pedantic academic who likes to correct others' misuse of Latin-derived plurals? This task can now be automated for you by means of SingularBot! Also for people who just like to have some fun. Free PongBot and SmileBot included.

🔗kitsune on Hokkaido island

I ended up being on Hokkaido island right when a major earthquake struck it, so there will be no activity on Matrix from me for the next couple of days. Also, donations to GlobalGiving for the disaster relief are welcome because people are really struggling here (abusing the communication channel, sorry).

🔗Matrix Live

...has got delayed again; sorry - we're rather overloaded atm. We'll catch up as soon as we can.