Hi all, Ben here.

It's been quite a ride since I joined the core team as Developer Advocate last year. One of the best things about the job is getting the chance to talk to so many people about their projects and what they would like to see happen in the Matrix ecosystem. With so much going on, I just want to say thanks to everyone who has been so welcoming to me, and to share some of my personal highlights, as I recall them, from 2018!

🔗Clients

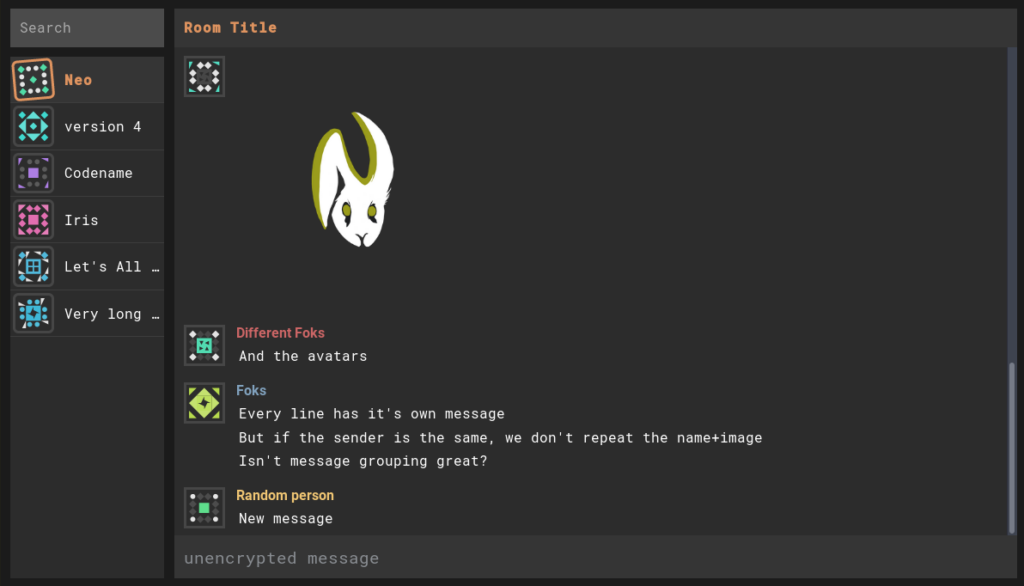

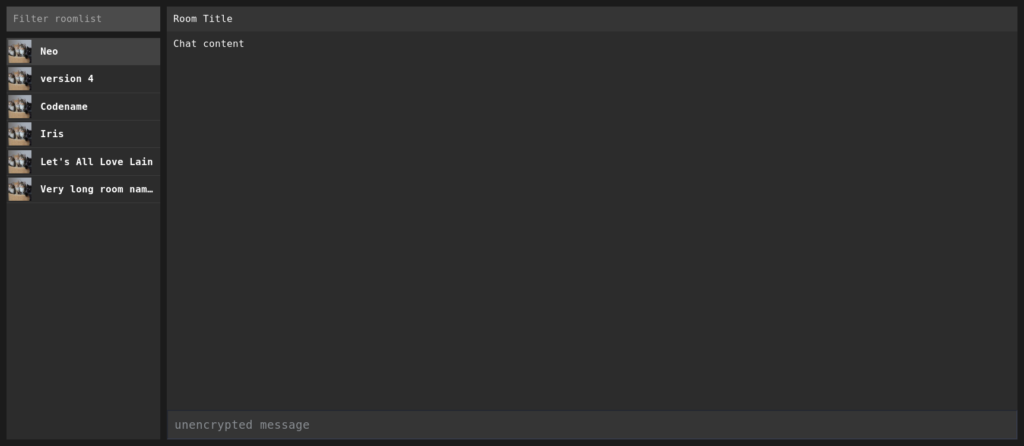

Fractal was featured in the very first TWIM, announcing v1.26. Since then, the team have hosted two IRL hackfest events (Strasbourg and Seville - where to next, Stockholm? Salisbury?), engaged two GSOC students and continued to push out releases. At this point, Fractal is a full-featured Matrix client for GNOME.

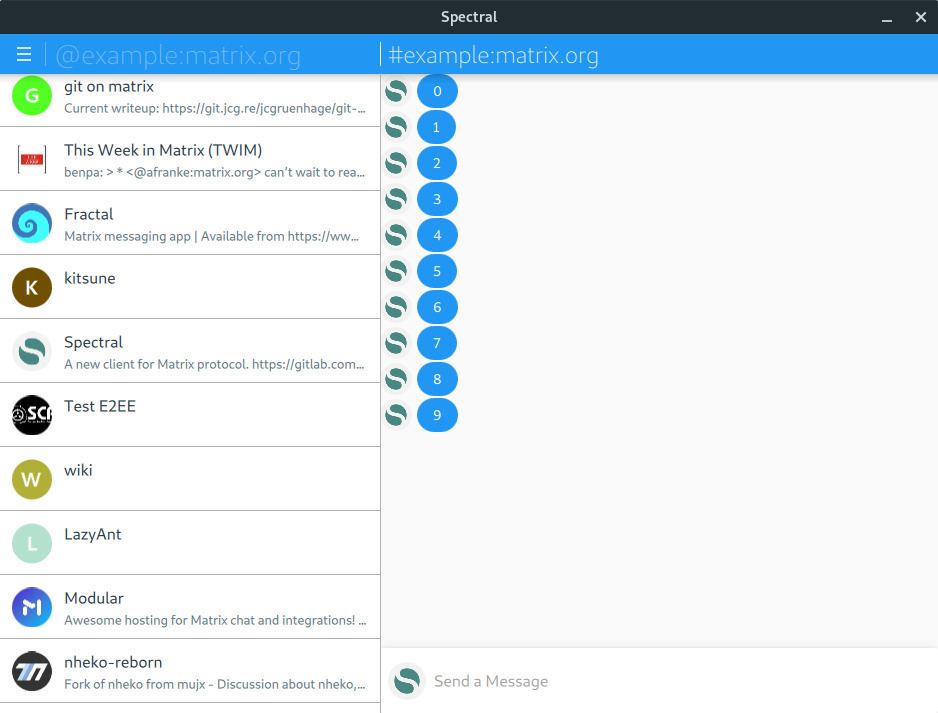

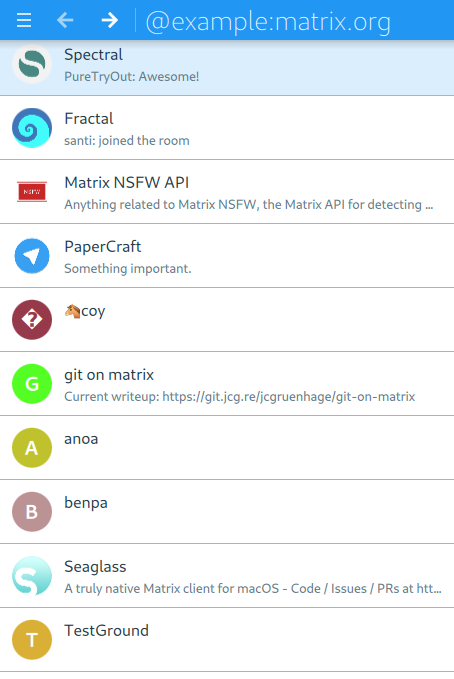

Matrique became Spectral, and is generally awesome. Apparently the name "Matrique" was chosen because it sounds French, but those who speak the language well revealed that this name was not ideal! The project was renamed "Spectral", and is going strong. I really appreciate the multi-user facility! It's a great-looking client, and runs well on macOS too (protip: get more attention from /me by providing a macOS build…)

On which subject, Seaglass is a native macOS client. First announced in June, this client supports E2EE rooms (via matrix-ios-sdk), and is also available on Homebrew.

Ubuntu Touch has the most Matrix clients per user of any platform. UT epitomises the resilience and collaborative spirit of Open Source. It's a true community maintenance effort, and is as friendly a community as you might meet. uMatriks came first, but it's FluffyChat that prompted me to install it on my battered old OnePlus One. FluffyChat is now extremely full-featured, with E2EE support being actively discussed.

On the command line, gomuks appeared and quickly became a competent client, but in terms of sheer enthusiasm and momentum, I must give commendation to matrix-client.el, a newly revived mode for Emacs which turns your editor/OS into a great Matrix client. I enjoyed using it enough that it began to change my mind about using Emacs. Laptops have more than 8 MB of memory these days anyway.

🔗A culture of bots

There is a tendency in the community to build a bot for everything and anything. This has reached the point where there are multiple flairs available depending on what bots you like to make (silly vs. serious).

TravisR was perhaps the first person I saw to get the obsession, creating

and more…

Cadair even made twimbot, designed to make it easier to consume and produce This Week in Matrix itself.

In June tulir started maubot, a plugin-based bot system built in Python, which now also has a management UI.

🔗All bridges lead to Matrix

Or from Matrix, depending on which way you want to send the message.

Around May, I started to notice another obsession brewing in the community. Bridging is a core part of the Matrix mission, but it was around this time I started seeing it in the wild.

In summer 2018, Half-Shot began working on the Matrix core team, and was hugely productive in maintaining and developing the bridge infrastructure for matrix.org. IRC bridging is far more stable and reliable now than it was a year ago. And yet there are still more bridges - too many to list, so I'm picking the ones I've used and enjoyed.

Discord is bridged by matrix-appservice-discord, handled by Half-Shot, aided and abetted by anoa but with a new maintainer this year, Sorunome. This bridge is now feature-rich and sits at v0.3.1.

tulir's suite of bridges, including mautrix-telegram and mautrix-whatsapp, is extremely stable and useful - big thank you to TravisR for maintaining t2bot.io and hosting the Telegram bridge too.

SMSMatrix, a phone-hosted bridge, is simple and works great for SMS bridging.

🔗Libraries, SDKs, Frameworks

I enjoyed using matrix-bot-sdk for building elizabot (more coverage needed for that!), and the SDK recently received support for application services.

In April, kitsune announced v0.2 of libqmatrixclient, describing it as "the first one more or less functional and stable" - confidence! This library now powers both Quaternion and Spectral. QMatrixClient has continued to get updates, plus features including lazy loading and VoIP signalling.

There are a few libs I want to pay more attention to this year, starting with tulir's maubot now that it has been rewritten in Python. I'm also excited to see jmsdk, a Java-based SDK that is part of ma1uta's broader ecosystem of Matrix tooling.

🔗Ruma Resurrection

Until around June, Ruma was receiving regular updates. There was a pause as the team waited for Rust async/await to land, and also to get some stability in the Matrix Spec. Still waiting on Rust, but now that the Matrix Spec is stabilising, Ruma is showing signs of life too. I have also been watching other homeserver projects begin to restart, which makes for a great start to 2019.

🔗DSN Traveller by Florian

Matrix was featured as part of a Master's thesis by Florian Jacob.

DSN Traveller tries to get a rough overview of how the Matrix network is structured today. It records how many rooms it finds, how many users and servers take part in those rooms, and how they relate to each other, meaning how many users a server has and how many rooms it is part of.

Florian's thesis was handed in last August. Source code is available.

All details at https://dsn-traveller.dsn.scc.kit.edu/, room at #dsn-traveller:dsn-traveller.dsn.scc.kit.edu.

🔗Still more

Synapse dominates the homeserver space right now, so if you want to host your own homeserver today it's the obvious choice. Too great a variety of installation guides was doing more harm than good, so Stefan took the initiative to create a definitive community-driven Synapse installation guide, including a room to discuss and improve the text. Find the guide linked from here, and chat about the guide in #synapseguide:matrix.org.

I want to use Matrix, and I want to host my own homeserver. As such, matrix-docker-ansible-deploy is a project I absolutely love. It uses Synapse docker images from the Matrix core team, and combines them with Ansible playbooks written and organised by Slavi. It lets you quickly deploy everything needed for a Synapse homeserver, and it's simple enough that even I can use it.

Construct, a homeserver implementation in C++, began successfully federating with Matrix, with work progressing from around April/May.

Having a Matrix-native mode for shields.io (those counter/indicator images you often see at the top of repos) seems like something petty at first, but it's actually a great indicator of the importance of Matrix from the outside. Plus, I love seeing the images at the top of different repos. Thanks Brendan for helping this along.

Two students worked on Matrix-related projects during GSOC 2018.

Something which came in super-helpful for me when testing homeserver installations was f0x's fed-tester.

Source code available (obv.)

🔗Thanks for all the projects

Thanks for a great 2018. There was so much to learn about, so much to write about, and so many great community members to meet and chat to! If I didn't mention your project, I'm sorry: I was either forgetful or simply unable to include everything.

If you think I've missed something, or if there's a project I should have included rather than another, or even if you just disagree with my choices, let's discuss it in #twim:matrix.org. See you there, and let's all parade ahead to a productive, open, interoperable 2019!